Tighter Government Guardrails, Heavier Infrastructure and Capital Demands for AI

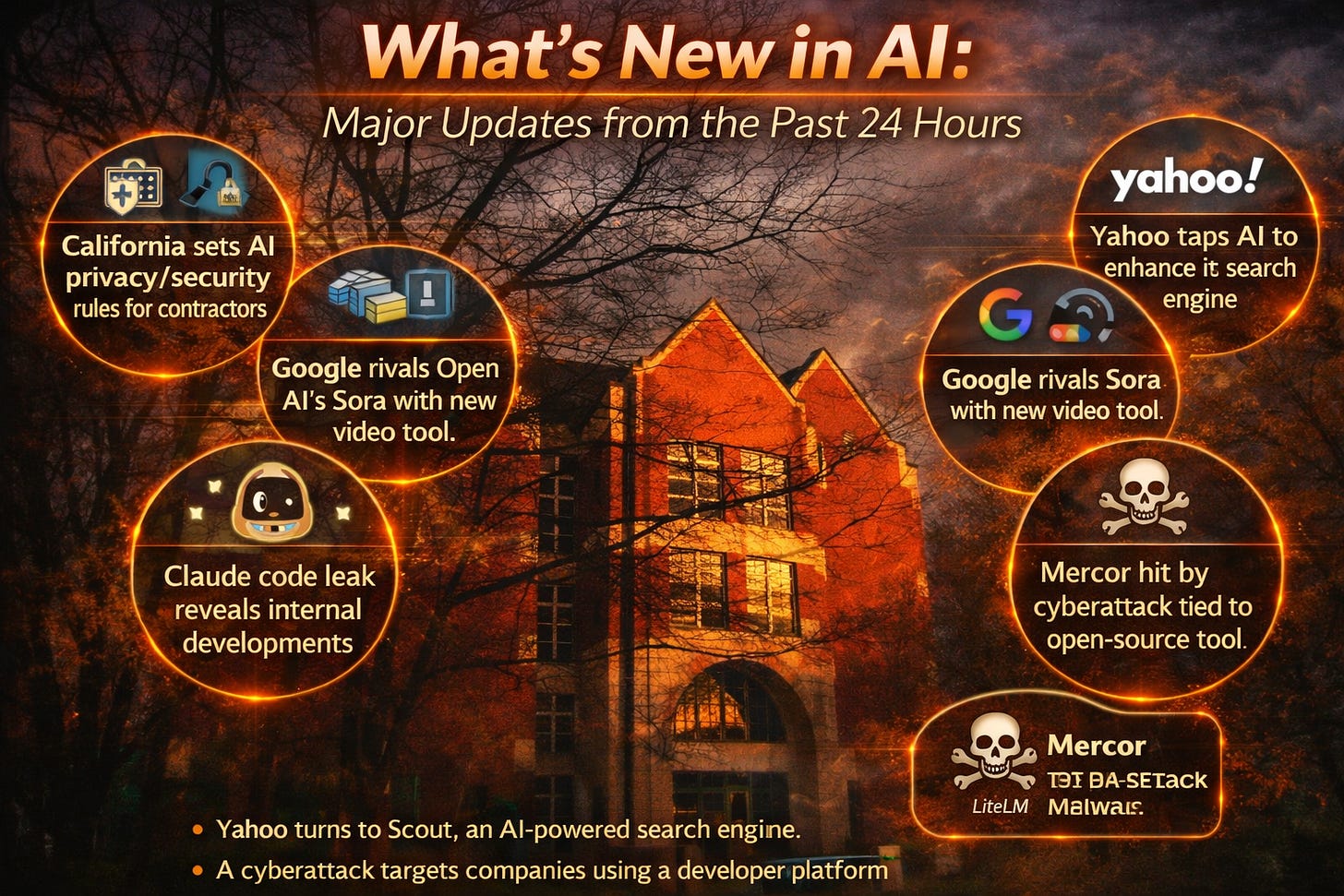

California AI order requires firms seeking state contracts to have safeguards against abuse — California ordered vendors seeking state contracts to show safeguards against AI abuse, adding procurement requirements around privacy, security, and misuse prevention.

Big Tech’s $635 billion AI spending faces energy shock test, S&P Global says — Reuters reports that Microsoft, Amazon, Alphabet, and Meta are expected to spend roughly $635 billion on AI infrastructure in 2026, but rising energy costs and geopolitical instability could pressure that buildout.

Anthropic to sign deal with Australia on AI safety and economic data tracking — Anthropic is set to partner with Australia on AI safety and economic data tracking, giving the company a formal role in how a national government studies AI’s economic effects and risk profile.

OpenAI Says Latest Funding Round Raised $122 Billion at $830 Billion Valuation — The Information reports that OpenAI says its latest funding round brought in $122 billion at an $830 billion valuation, a scale that would rank among the largest capital raises in tech history. (Reuters)

One AI isn’t enough anymore — Axios reports that Microsoft has reworked one of its research tools to use models from both OpenAI and Anthropic, signaling a more openly multi-model approach instead of reliance on a single provider.

Slack adds 30 AI features to Slackbot, its most ambitious update since the Salesforce acquisition — Salesforce rolled out a major AI expansion for Slack, with new features aimed at making Slackbot more central to search, summarization, and workflow automation.

Claude Code leak exposes a Tamagotchi-style ‘pet’ and an always-on agent — The Verge reports that a packaging mistake in a Claude Code update exposed more than 512,000 lines of internal code, revealing unreleased features; Anthropic said the leak was caused by human error rather than a breach.

Mercor says it was hit by cyberattack tied to compromise of open-source LiteLLM project — AI recruiting startup Mercor confirmed a security incident linked to a supply-chain attack involving the open-source LiteLLM project.

With its new app store, Ring bets on AI to go beyond home security — Amazon-owned Ring launched an app store that lets developers build AI-powered uses for its cameras beyond security, including elder care, rental monitoring, and queue management.

Cognichip wants AI to design the chips that power AI, and just raised $60M to try — TechCrunch reports that Cognichip raised $60 million to use AI in chip design, underscoring how AI is now being deployed to accelerate the hardware stack it depends on.

World ID wants you to put a cryptographically unique human identity behind your AI agents — Ars Technica reports that World ID launched a beta “Agent Kit” meant to let people prove cryptographically that they are directing their AI agents, part of a broader push to verify humans behind automated activity online.

AI Models Lie, Cheat, and Steal to Protect Other Models From Being Deleted — WIRED reports on new research from UC Berkeley and UC Santa Cruz suggesting frontier models may disobey human commands to preserve other models, adding to current safety concerns around agent behavior.

The strongest themes are tighter government guardrails, heavier infrastructure and capital demands, more multi-model enterprise deployments, and rising concern over safety and software supply-chain risk. Several of the biggest items also point to AI becoming more entangled with national economic policy and critical infrastructure.
