AI Enters a More Tense Phase

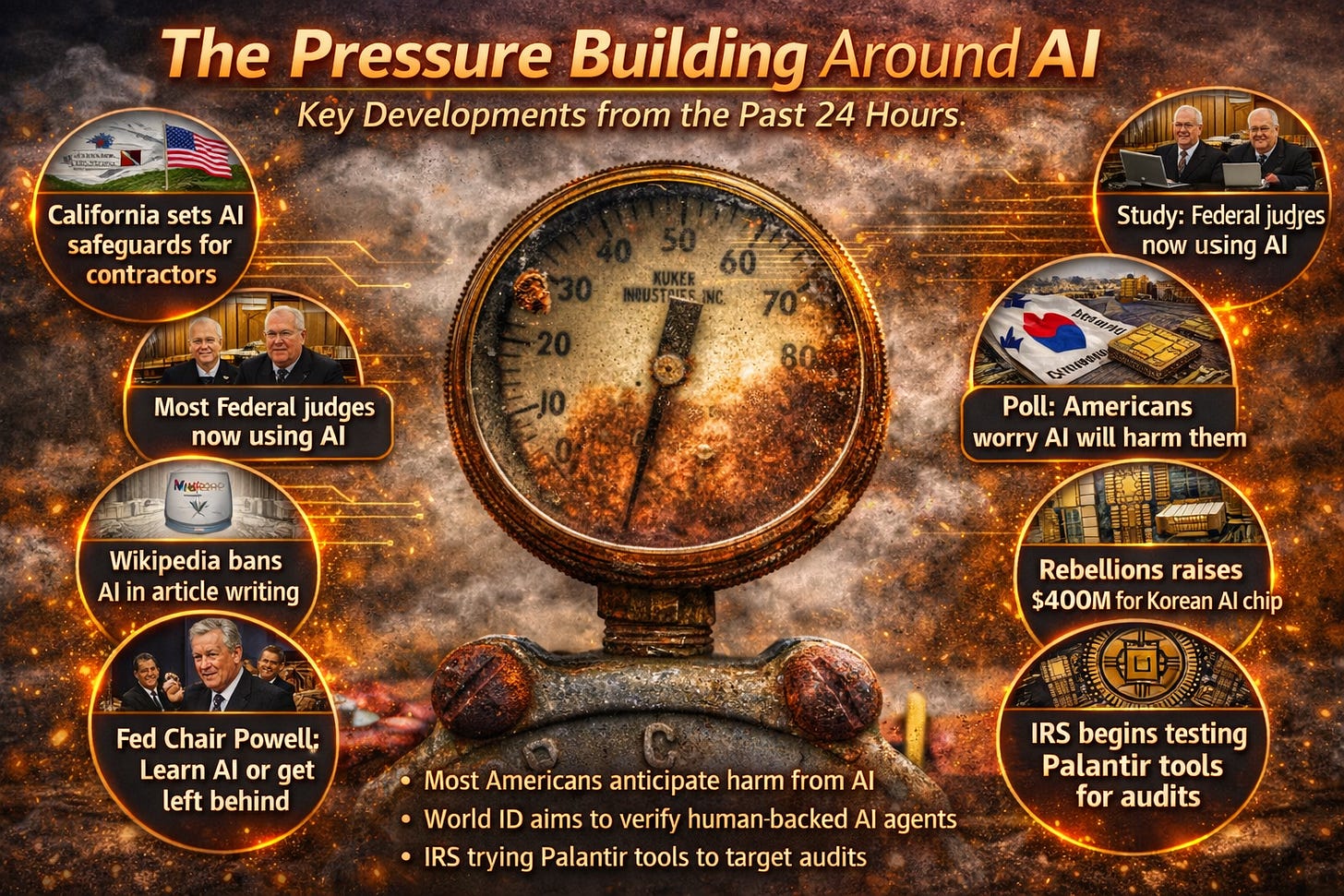

California requires AI safeguards for state contractors — California ordered companies seeking state contracts to show that their AI systems include safeguards against abuse, according to Reuters. The rule is aimed at procurement rather than the entire market, but it adds another concrete compliance layer for vendors selling AI systems to government.

Most U.S. federal judges are now using AI, study says — Reuters reports that a majority of federal judges in the United States are using AI tools in some form, marking another step in AI’s spread into core institutions. The finding suggests AI is moving from experimental use into mainstream professional workflows inside the judiciary.

South Korea’s AI chip startup Rebellions raised $400 million — Rebellions closed a $400 million funding round, Reuters reports, with the company positioning itself as a Korean challenger in AI chips and planning U.S. expansion. The round underscores how AI hardware competition is widening beyond the largest U.S. players.

A new poll found most Americans think AI is likely to harm them — Bloomberg reports that more than half of Americans say AI is likely to harm them, adding to evidence that public skepticism remains high even as adoption rises. The result points to a widening gap between how fast AI is spreading and how much people trust it.

Wikipedia formally tightened its rules against AI-written articles — Semafor reports that Wikipedia updated its policy to ban the use of AI to generate or rewrite articles, while still allowing narrower uses such as copy-editing and translation. The change reflects a harder institutional line against AI-generated prose in one of the internet’s most important reference projects.

U.S. AI adoption is rising, but trust in the technology is falling — TechCrunch reports that a new poll found more Americans are using AI tools, even as fewer say they trust the results. The story captures a pattern showing up across the sector: usage is becoming normal even as confidence erodes.

World ID launched tools to prove humans are behind AI agents — Ars Technica reports that World ID introduced a beta “Agent Kit” designed to let people cryptographically prove they are directing their AI agents. The release is part of a broader push to solve a growing problem online: how to verify that an AI acting on a website or service is tied to a real person.

The IRS is testing Palantir tools to help target audits — WIRED reports that the IRS is testing a Palantir system to identify high-value audit and investigation targets from older agency databases. The story shows AI moving deeper into government enforcement and risk-scoring work, where questions about accuracy and oversight carry especially high stakes.

Fed Chair Jerome Powell urged young workers to learn AI rather than fear it — Fortune reports that Powell told Harvard students that AI is likely to be both disruptive and productivity-enhancing, and that learning to use it well is a better response than trying to avoid it. The comments add to the growing public debate over AI’s effect on jobs and career paths.

The clearest thread across these stories is that AI is becoming increasingly embedded in public institutions and everyday work, even as skepticism, regulatory pressures, and verification requirements continue to rise.
